Content Moderation Dashboard

At Hive, I worked with PMs, SWEs, and Product Designers to ship V1 of one of Hive’s flagship products, the Hive Moderation Dashboard.

What is Hive Moderation?

Hive is an AI data labeling company specializing in training AI/ML models for automated content moderation. This project aimed to develop a web dashboard that allows businesses to monitor content moderation on their platforms and adjust the AI model’s behavior when necessary. Hive’s AI model was trained to detect various types of harmful content, including images and videos of gore and illicit substances, as well as text and audio containing offensive language, spam, and bullying.

Previous API Delivery Model:

Before the development of the Content Moderation Dashboard, Hive’s clients integrated the Moderation AI directly through Hive’s API.

This setup enabled Hive’s AI to detect, flag, and remove unsuitable content within the client’s application.

However, without a centralized interface, adjusting moderation settings and getting insight into flagged content required technical work, which made fine-tuning the AI’s performance harder and less accessible.

Project goals

Conduct research to learn how our clients use our APIs, so we can build a better experience

Build a centralized platform that gives clients a code-free way to view their content moderation live

Allow easy customization of moderation rules and thresholds to fit each client’s content policies and business needs

Design additional product features (e.g. user insights, bot management) for clients that lack the technical expertise or bandwidth to build these products themselves

My team

My role involved discovery, user research, wireframing, high-fidelity designs, prototyping, user testing, and dev handoff.

In collaboration with one Product Designer, a PM, and an SWE team

Gathering insights

My team and I conducted discovery and user research to learn how our clients were using our APIs, or building product solutions on top of them, to moderate their content. We ran interviews and studies with clients to understand their current workflows, needs, and pain points. Combined with research into the market and product landscape, this let us define product goals that best suit our clients’ needs.

Here are examples of makeshift solutions clients built to monitor content moderation on their platforms.

User personas: understanding our audience

From our user research, we consolidated user needs, goals, and pain points into two target personas that represent the key characteristics and motivations of our intended users.

Community guidelines moderator

✅ Wants to monitor and view content moderation on the application in real time

✅ Wants to review and moderate content personally

❌ Does not have technical knowledge to access code/ review API results

❌ Unable to take action without technical support

Brand safety engineer

✅ Wants to customize API settings to fit their application’s guidelines and needs

✅ Wants to streamline workflows between content moderation and platform actions (e.g. user restrictions, bans)

❌ Lacks the bandwidth to spend significant time adjusting and customizing the API to their business needs

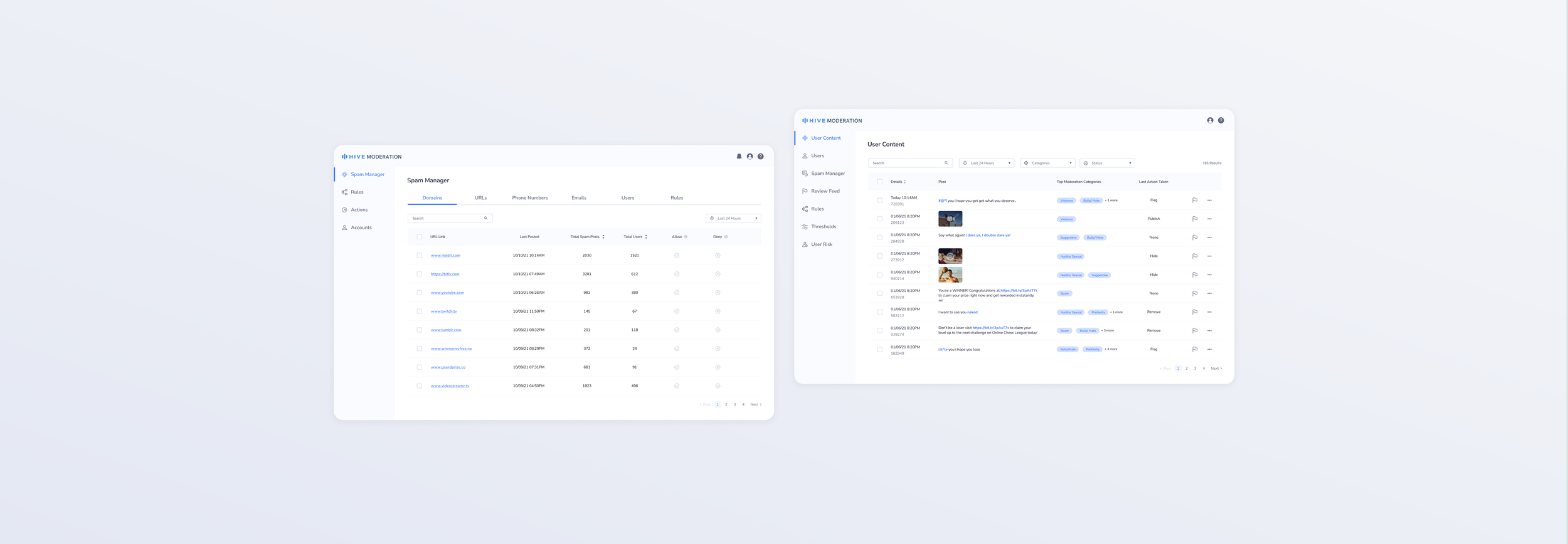

Product scope

Our team started with a focused V1, prioritizing text-based content flagged as “spam” by the AI model. This approach allowed us to test the moderation system on a specific content type before expanding to other media. The dashboard displayed live data of text flagged as spam, allowing platform admins to review the AI’s classifications and either confirm or correct its decisions. A dedicated settings page also allowed technical admins to create custom rules, such as banning users who post excessive spam. After the initial MVP release, our team will continue to test and iterate based on customer feedback and success metrics.
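To make the idea of a custom rule concrete, here is a minimal Python sketch of a "ban users who post excessive spam" rule. It is an illustration only, not Hive’s implementation: the names `SpamRule`, `FlaggedText`, and `users_to_ban` are hypothetical, and the spam score is assumed to be a model confidence between 0 and 1.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class SpamRule:
    """Hypothetical rule: ban a user after too many spam-flagged posts."""
    score_threshold: float  # score at or above which a post counts as spam
    max_spam_posts: int     # spam posts tolerated before the ban applies


@dataclass(frozen=True)
class FlaggedText:
    """Hypothetical record for one moderated text post."""
    user_id: str
    spam_score: float  # assumed to be a confidence in [0, 1]


def users_to_ban(posts: list[FlaggedText], rule: SpamRule) -> set[str]:
    """Return users whose count of spam posts exceeds the rule's limit."""
    spam_counts: dict[str, int] = defaultdict(int)
    for post in posts:
        if post.spam_score >= rule.score_threshold:
            spam_counts[post.user_id] += 1
    return {user for user, count in spam_counts.items()
            if count > rule.max_spam_posts}


# Example: a strict threshold with a limit of two spam posts per user.
rule = SpamRule(score_threshold=0.9, max_spam_posts=2)
posts = [
    FlaggedText("alice", 0.95),
    FlaggedText("alice", 0.97),
    FlaggedText("alice", 0.99),
    FlaggedText("bob", 0.95),
    FlaggedText("bob", 0.40),
]
banned = users_to_ban(posts, rule)
```

In this sketch, the two values an admin would tune are the score threshold (how confident the model must be) and the post limit (how much spam is tolerated), which mirrors the "rules and thresholds" customization described in the project goals.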

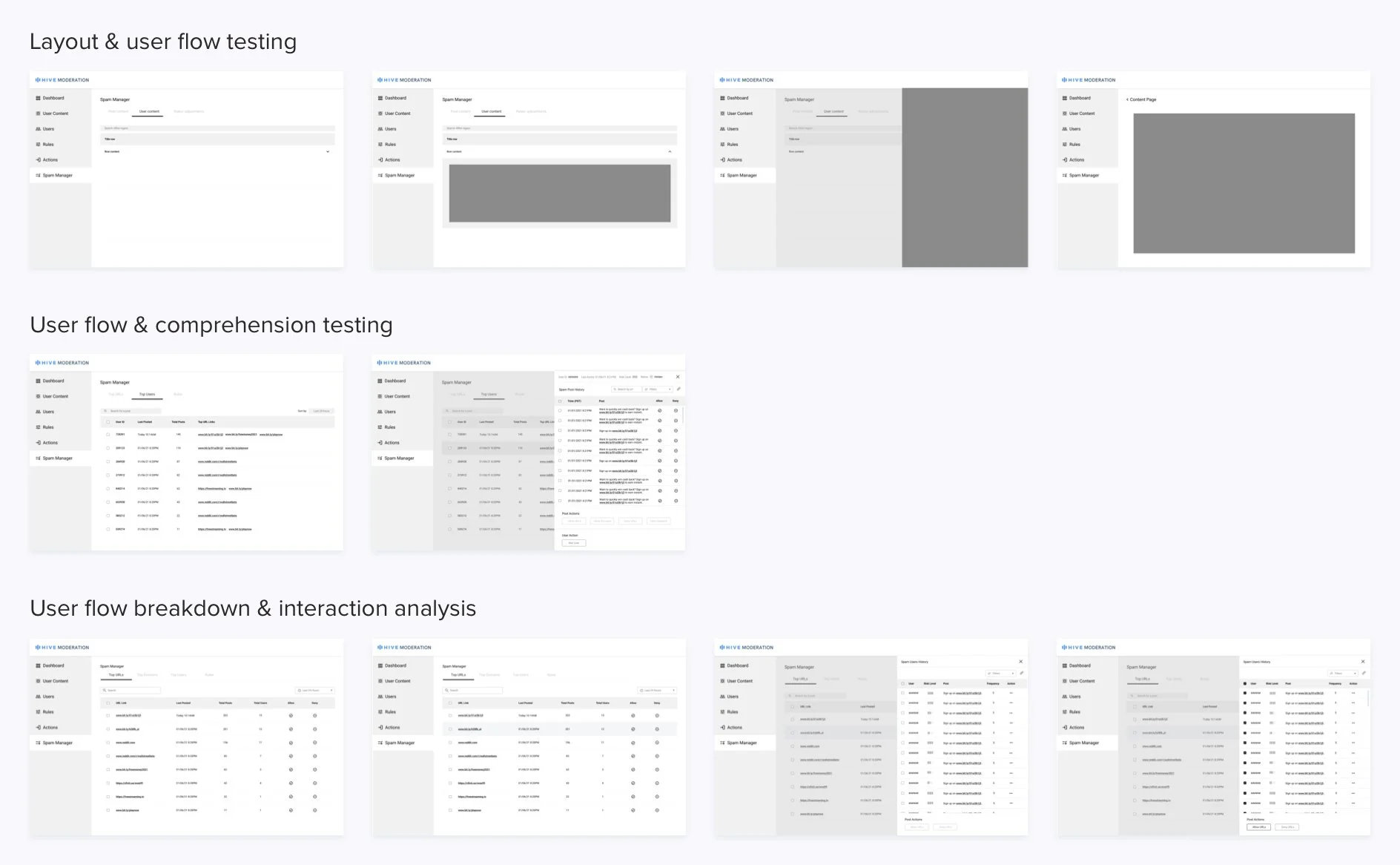

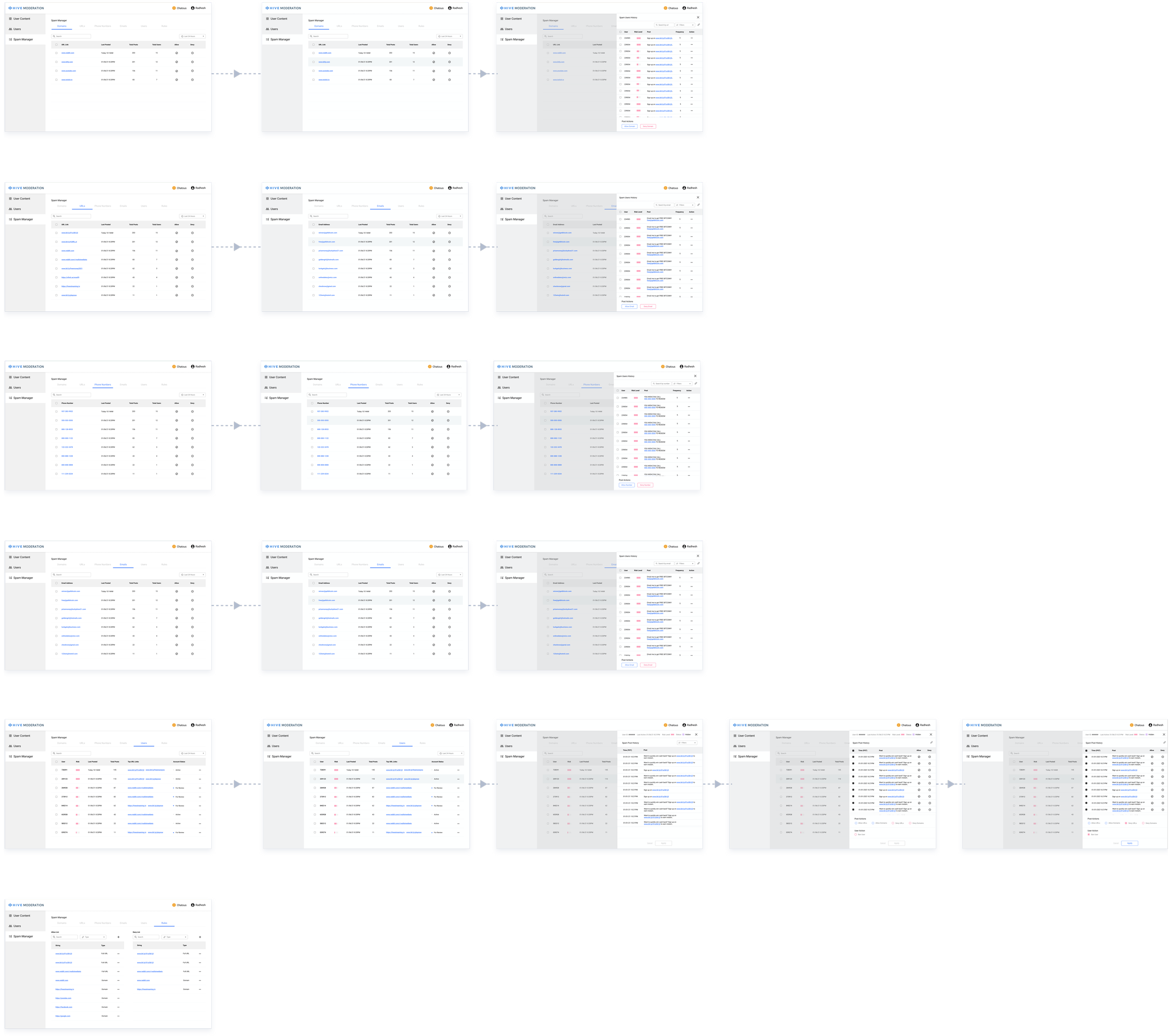

Process

I took a user-centered and highly iterative approach, starting with an in-depth analysis of our user research results and collaborating closely with the product and research teams to solve problems within our scope. From wireframes to high-fidelity prototypes, we aligned the dashboard’s functionality with user needs, refining it through continuous feedback and usability testing. Here are some examples of the information architecture, layout, and user flow variations the team explored together:

Hi-fi designs & prototyping

I refined my low-fidelity screens into high-fidelity user interface screens and created a Figma prototype to test our designs with small customer feedback groups. Testing the prototype with users surfaced new insights and issues, which we used to improve the product before final development.

Examples of flows users walked through in the prototype:

Outcome

The project culminated in a fully functional V1 “spam” moderation dashboard, laying the foundation for future iterations. I worked closely with stakeholders to ensure the final dev handoff was a smooth and collaborative process. With a robust, research-driven design, the dashboard is prepared to expand into a complete content moderation solution that accommodates various data types (text, images, video, and audio) and addresses broader content moderation needs beyond “spam”.

Feel free to explore the prototype of our current V1 design: